It can be inferred that your data is perfect fit if the value of RSS is equal to zero. It is actually the sum of the square of the vertical deviations from each data point to the fitting regression line. Residual Sum of Squares is usually abbreviated to RSS. The key linear fit statistics are summarized in the Statistics table, like what is shown below: Note that you should include restrictions to make sure that the fitting model is meaningful, which you can refer to this section. In other words, the function is over-parameterized and the parameter may be redundant. For example, if some dependency values are close to 1, this could mean that there is mutual dependency between those parameters. The dependency value, which is computed from the variance-covariance matrix, typically indicates the significance of the parameter in your model. For example, in the above image of Parameters table, we are 95% sure that the true value of offset(y0) is between 4.16764 and 6.51631, the true values of center(x0) is between 24.73246 and 25.08134, and the true values of width(w) is between 9.75801 and 10.58138. UCL and LCL, upper and lower confidence intervals of parameter, indicate how likely the interval is to contain the true value. LCL and UCL (Parameter Confidence Interval) If Prob>|t| |t|, the more unlikely the parameter is equal to zero. However, the Prob>|t| is easier to interpret, and we recommend that you ignore t-Value and judge by Prob>|t|. If the t-value is larger than the critical t-value ( ), it can be said that there is a significant difference. T-Value = Fitted value/Standard Error, for example the t-Value for y0 is 5.34198/0.58341 = 9.15655.įor this statistical t-value, it usually compares with a critical t-value of a given confident level (usually be 5%). We add higher order terms until a t-test for the newly-added term suggests that it is insignificant. For example, in polynomial regression, we can use it to determine the proper order of the polynomial model. 
The t-test can also be used as a detection tool. So if the null hypothesis is not rejected, the corresponding predictor will be viewed as insignificant, which means that it has little to do with the response. The null hypothesis for a parameter's t-test is that this parameter is equal to zero. Is every term in the regression model significant? Or does every predictor contribute to the response? The t-tests for coefficients answer these kinds of questions. If the standard error values are much greater than the fitted values, the fitting model may be overparameterized. Typically, the magnitude of the standard error values should be lower than the fitted values.

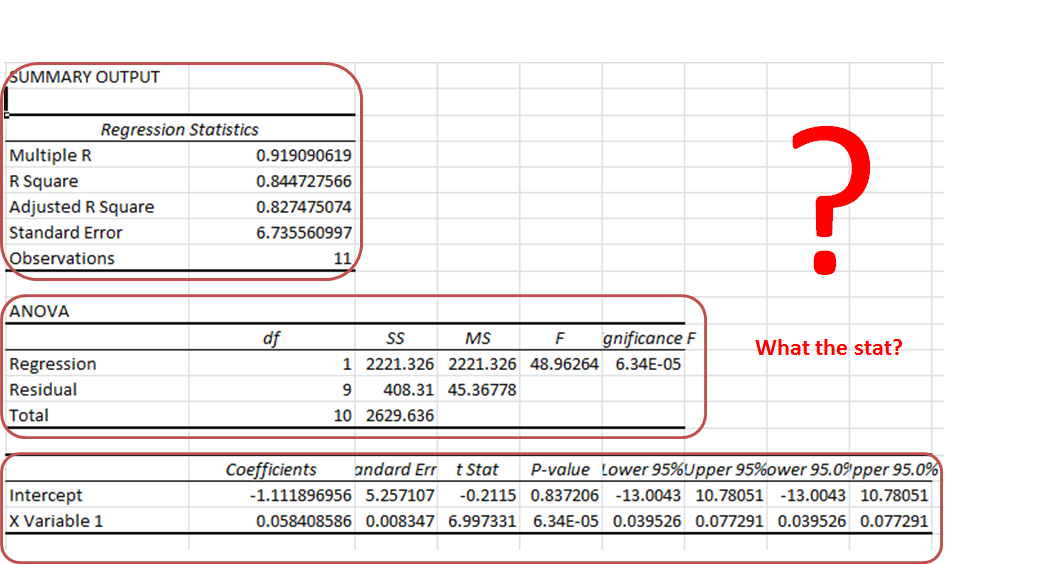

The parameter standard errors can give us an idea of the precision of the fitted values. The fitted values are reported in the Parameters table, like what is shown below:Įstimated values for each parameter of the best fit which would make the curve closest to the data points. Notes: To learn more about the algorithm and equations of these statistics, see Theory of Nonlinear Curve Fitting In this article, we explain how to interpret the imporant regressin reslts quickly and easily If you perform a regression analysis, you will generate an analysis report sheet listing the regression results of the model. Regression is used frequently to calculate the line of best fit. 2.2 Scale Error with sqrt(Reduced Chi-Sqr).1.5 LCL and UCL (Parameter Confidence Interval).
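The Residual Sum of Squares described in this article can be sketched in a few lines of Python. This is only a minimal illustration: the data points and the fitted line y = 1 + 2x are hypothetical values made up for the example, and the function name `residual_sum_of_squares` is not taken from any particular software package.

```python
def residual_sum_of_squares(y_observed, y_fitted):
    """Sum of squared vertical deviations between data and fitted values."""
    if len(y_observed) != len(y_fitted):
        raise ValueError("observed and fitted values must have the same length")
    return sum((y - f) ** 2 for y, f in zip(y_observed, y_fitted))


# Hypothetical data and a hypothetical fitted line y = 1 + 2x (illustration only).
x_data = [0.0, 1.0, 2.0, 3.0]
y_data = [1.0, 3.1, 4.9, 7.2]
y_fit = [1.0 + 2.0 * x for x in x_data]

# RSS for the noisy data against the fitted line.
rss = residual_sum_of_squares(y_data, y_fit)

# If every data point lies exactly on the fitted curve, RSS is zero.
rss_perfect = residual_sum_of_squares(y_fit, y_fit)

print(f"RSS = {rss:.4f}")
print(f"RSS for a perfect fit = {rss_perfect}")
```

A smaller RSS means the fitted curve passes closer to the data, and the second call shows the perfect-fit case where RSS is exactly zero.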

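The t-Value and confidence interval figures quoted in this article for the offset parameter y0 can be checked with simple arithmetic. The sketch below uses only the numbers given in the text. It assumes the interval is symmetric about the fitted value (fitted value ± critical t × standard error), which lets us back out the critical t-value implied by the reported limits. The variable names are illustrative.

```python
# Values quoted in the article for the offset parameter y0.
fitted_value = 5.34198
standard_error = 0.58341
lcl, ucl = 4.16764, 6.51631  # reported 95% confidence limits

# t-Value = fitted value / standard error.
t_value = fitted_value / standard_error

# Assumption: the interval is fitted_value +/- t_crit * standard_error,
# so its midpoint should reproduce the fitted value ...
midpoint = (lcl + ucl) / 2

# ... and its half-width divided by the standard error gives the
# critical t-value implied by the reported limits.
half_width = (ucl - lcl) / 2
implied_t_crit = half_width / standard_error

# The parameter is judged significant when |t| exceeds the critical t-value.
is_significant = abs(t_value) > implied_t_crit

print(f"t-Value            = {t_value:.5f}")
print(f"interval midpoint  = {midpoint:.5f}")
print(f"implied critical t = {implied_t_crit:.5f}")
print(f"significant        = {is_significant}")
```

The computed t-Value differs from the quoted 9.15655 only in the later decimals, presumably because the report divides unrounded values.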